1. Overview

rcisignal consolidates the quality-assurance workflow

for reverse-correlation (RC) experiments into one toolkit. It addresses

three questions, in order. First, are the inputs clean (response coding,

trial counts, response bias, stimulus- pool alignment)? Second, is the

signal informative and stable (does each condition’s group CI carry more

pattern than chance, and would the pattern replicate on a different half

of the producers)? Third, when there is more than one condition, are the

conditions distinguishable, both in overall magnitude and in spatial

location?

Two halves of the package address these questions in turn. The

input-side diagnostics (run_diagnostics() and the

check_* family) cover the first question. The output-side

reliability and discriminability metrics

(run_reliability(), run_discriminability(),

infoval(), agreement_map_test(), together with

the lower-level building blocks rel_*() and

pixel_t_test()) cover the second and third.

1.1 Scope

For 2IFC stimulus generation and CI computation,

rcisignal delegates to the upstream rcicr package

(Dotsch, 2016, 2023). ci_from_responses_2ifc() is a small

convenience function around rcicr::batchGenerateCI2IFC()

that takes care of the integration quirks. Brief-RC support (Schmitz,

Rougier, & Yzerbyt, 2024) is built into rcisignal directly, because

rcicr does not yet provide it.

The metrics in this package quantify whether a CI is stable (within-condition) and separable (between-condition). Whether the CI accurately reflects the producer’s mental representation of the target trait is a separate validity question, typically addressed by an external rater study, and sits outside the package. Cone, Brown-Iannuzzi, Lei, & Dotsch (2021) showed that the standard two-phase rating design inflates Type I error; rcisignal’s metrics operate directly on producer-level pixel signal and thereby sidestep that pitfall.

The intended audience is RC researchers at an intermediate R level

with basic familiarity with the rcicr package or with the

Schmitz et al. (2024) Brief-RC structure. No prior expertise in

data.table, permutation testing, or intraclass correlation

is assumed.

1.2 Validation status

Worth flagging before any published use of this package: not all of the metrics it ships are independently validated for social-face RC data. The package is best treated as a toolbox that collects existing methods, plus a few natural extensions of those methods, into one place. Some of those extensions are mature and well-grounded in adjacent literatures; others are sensible-looking implementations that have not yet had a dedicated validation study on the kind of data this package targets. Reporting accordingly matters.

Validated in their respective domains:

-

Per-producer infoVal for 2IFC (Brinkman et al.,

2019). The z-score derivation, reference distribution, and the

z >= 1.96threshold all originate from the Brinkman et al. paper, which validated the metric on social-face 2IFC data. - Pixel-test methodology (Chauvin et al., 2005). The Welch-style per-pixel t-statistic, plus its smoothing and inferential framing, are well-established in the classification-image and neuroimaging literatures.

- Cluster-based permutation tests for FWER control (Maris & Oostenveld, 2007). Validated on EEG and MEG data, with the underlying logic carrying over to any spatial statistical map.

- Threshold-free cluster enhancement (TFCE) (Smith & Nichols, 2009). Validated on neuroimaging data; same transferability caveat as above.

Package-level extensions, not yet independently validated for social-face RC:

- Group-mean infoVal. A natural extension of per-producer infoVal to the group-average CI, with a trial-count-matched reference. Brinkman et al. (2019) recommend reporting the distribution of per-producer infoVals rather than a single group z; the group-mean version is offered here as a supplementary summary, not as a replacement for the per- producer reporting.

- Between-condition discriminability tests (cluster-based permutation and TFCE). The underlying machinery is borrowed from neuroimaging where it is well-validated; its specific behaviour on social-face CI maps (which differ from EEG/MEG or fMRI in spatial structure, signal-to-noise, and base-image artefacts) has not been the subject of a dedicated validation study.

-

Pixel-wise agreement / reliability maps

(

agreement_map_test()and the related plot helpers). Same machinery as above, applied within a single condition. Same caveat. - infoVal applied to Brief-RC. The Frobenius-norm logic transfers, and the trial-count-matched reference closes the most obvious calibration gap relative to a pool-keyed reference. The threshold conventions inherited from 2IFC have not been re-validated on Brief-RC.

If you use the unvalidated metrics in published work, please report them as exploratory and indicate the package version. If you are aware of validation studies I have missed, I would be glad to update this section (m.j.barbosa.de.oliveira@tue.nl).

2. Installation

# Latest release from GitHub.

remotes::install_github("olivethree/rcisignal",

dependencies = TRUE)

# rcicr is a Suggests dep; install it if you need the 2IFC path.

install.packages("rcicr") # CRAN

remotes::install_github("rdotsch/rcicr") # developmentThe mandatory dependencies are minimal (cli and

data.table, plus the base packages). PNG and JPEG readers

(png, jpeg), rcicr for 2IFC

pipelines, and psych for ICC cross-validation sit in

Suggests and load on demand.

rcisignal is in an experimental stage and exported

functions are still being refined. Re-running the

install_github() call above at the start of each analysis

session pulls the latest version; this user guide is kept in sync with

new and updated functions.

2.1 Quickstart with simulated data

Two helpers, simulate_2ifc_data() and

simulate_briefrc_data(), generate a complete synthetic

dataset (responses + noise pool +, for 2IFC, an

rcicr-format .Rdata) so the rest of this

vignette can be exercised without needing to bring your own files. They

are also building blocks for simulation studies (power, calibration of

reliability and discriminability metrics, sensitivity to

contamination).

What they generate

-

Responses. A long-format

data.tablewith one row per trial and the columns every diagnostic / CI function expects:participant_id,condition,trial,stimulus,response(in{-1, +1}),rt(in milliseconds). -

Noise pool. A

pixels x n_trialsnumeric matrix, generated on the fly viarcicr::generateNoisePattern()andrcicr::generateNoiseImage(). (rcicrmust be installed; with the default 256-pixel images and 500 trials the pool takes roughly one to three minutes, with acliprogress bar.) -

For 2IFC, an

.Rdatafile in the format thatrcicr::generateStimuli2IFC()writes, soci_from_responses_2ifc(),compute_infoval_summary(), and every other function that asks for anrdataargument works out of the box. -

A self-contained

$stimulilist that round-trips throughsaveRDS()/readRDS()and a$base_image_pathPNG written next to the stimuli.Rdata. The first survives session restarts when handed to a consumer viastimuli =; the second is the rcicr-style on-disk artefact for tools that expect a base-face file.

The return value is an rcisignal_sim S3 object:

sim <- simulate_2ifc_data()

str(sim, max.level = 1)

#> List of 10

#> $ data : data.table [50000 x 6]

#> $ noise_matrix : num [1:65536, 1:500] (pixels x trials)

#> $ base_face : num [1:256, 1:256]

#> $ params : num [1:500, 1:4092] (rcicr stimuli_params)

#> $ p : list of 4 (rcicr noise basis)

#> $ signal : num [1:65536] (planted signal vector)

#> $ rdata_path : chr "/tmp/.../rcisignal_sim_2ifc_stimuli.Rdata"

#> $ base_image_path : chr "/tmp/.../rcisignal_sim_2ifc_base_face.png"

#> $ stimuli : list of 11 (portable, round-trips via saveRDS)

#> $ meta : list (seed, elapsed, etc.)Defaults at a glance

| Argument | Default | Notes |

|---|---|---|

n_per_condition |

50 |

participants per condition |

conditions |

c("target", "control") |

any character vector works |

n_trials |

500 |

per participant; equals noise pool size for 2IFC |

images_per_trial (Brief-RC only) |

12 |

= 6 original/inverted pairs |

noise_pool_size (Brief-RC only) |

n_trials * (images_per_trial / 2) |

shared across participants |

img_size |

256 |

pixels; matches the bundled base face |

base_image |

inst/extdata/sim_base_face.png |

a 256x256 grayscale artificial face; pass a path or matrix to override |

signal_strength |

"weak" |

also "none" (true null), "strong", or a

numeric coefficient |

signal_region |

"eyes" |

any region accepted by make_face_mask()

|

rt_contamination_fast / _slow

|

0.02 / 0.02

|

fraction of trials replaced by uniform-fast (50-200 ms) / uniform-slow (5000-20000 ms) responses |

noise_type, nscales,

sigma

|

"sinusoid", 5, 25

|

forwarded to rcicr::generateNoisePattern()

|

rdata_dir |

NULL |

optional directory for a stable-path stimuli .Rdata;

pass to keep the sim usable across R sessions |

seed |

NULL |

a random seed is drawn and stored on the result |

progress |

TRUE |

shows a cli progress bar during noise generation |

Signal model

Each trial’s response is drawn from a logistic / softmax model whose

location depends on a planted pixel-level signal s (the

binary mask returned by make_face_mask() for the chosen

signal_region).

-

2IFC. On each trial

tthe participant sees image_a = base + noise[t] and image_b = base - noise[t] and chooses one. The log-odds of choosing image_a (response =+1) arebeta * (noise[, t] %*% s) / sqrt(sum(s)). Withsignal_strength = "none"(beta = 0), choices are uniform random; with"weak"(beta = 0.5) the planted region biases responses just enough that a 50 x 2 x 500 dataset yields a recognisable cluster on the eyes region;"strong"(beta = 2) produces a much sharper signal. -

Brief-RC. Each trial shows

images_per_trial = 2kimages (the original and inverted versions ofkdistinct noise patterns drawn from the shared pool). Each image gets a Gumbel-perturbed utility±beta * (noise %*% s) / sqrt(sum(s)) + Gumbel(0,1)and the participant picks the argmax (multinomial-logit / softmax). The recordedstimulusis the pool index of the chosen pair;responseis+1if the original version of that pair was chosen,-1if the inverted version.

A weak signal is the default rather than "none" so the

worked example produces a recognisable CI on the planted region rather

than a flat null result. Pass signal_strength = "none" to

get truly bogus data (useful for testing the diagnostic side,

calibrating null distributions, or stress-testing the reliability /

cluster permutation code under no-signal conditions).

Response-time model

RTs follow a shifted lognormal

(rt = round(exp(rnorm(n, log(800), 0.5)) + 150), in

milliseconds) with two contaminant streams:

-

Fast contaminants at

rt_contamination_fast(default 2%): uniform[50, 200]ms, mimicking accidental clicks. -

Slow contaminants at

rt_contamination_slow(default 2%): uniform[5000, 20000]ms, mimicking distraction or task pauses.

These are deliberately tuned so that check_rt() finds

something to flag (useful for sanity-checking the RT diagnostic without

curating real outliers by hand).

End-to-end demo (2IFC)

Pasting the chunk below into a fresh R session takes you from no data at all to a within-condition reliability summary:

sim <- simulate_2ifc_data(

n_per_condition = 30, # smaller for a quick demo

n_trials = 200,

signal_strength = "weak",

seed = 1

)

# Step 1: run the diagnostic battery on the simulated responses.

# Pass the simulator's rdata so rdata-dependent sub-checks (response

# inversion, infoval consistency) run too. `stimuli = sim$stimuli`

# is an in-memory equivalent.

print(run_diagnostics(sim$data, method = "2ifc",

rdata = sim$rdata_path, col_rt = "rt"))

# Step 2: compute per-participant CIs using the bundled .Rdata.

target_rows <- subset(sim$data, condition == "target")

control_rows <- subset(sim$data, condition == "control")

cis_target <- ci_from_responses_2ifc(target_rows,

rdata_path = sim$rdata_path)

cis_control <- ci_from_responses_2ifc(control_rows,

rdata_path = sim$rdata_path)

# Step 3: within-condition reliability.

print(run_reliability(cis_target$signal_matrix, seed = 1))

print(run_reliability(cis_control$signal_matrix, seed = 1))

# Step 4: between-condition cluster test.

print(run_discriminability(

signal_matrix_a = cis_target$signal_matrix,

signal_matrix_b = cis_control$signal_matrix,

seed = 1

))For the Brief-RC pipeline the equivalent demo replaces

simulate_2ifc_data() with

simulate_briefrc_data() and

ci_from_responses_2ifc() with

ci_from_responses_briefrc(). The Brief-RC consumer reads

the noise matrix directly, so the call becomes

ci_from_responses_briefrc(sim$data, noise_matrix = sim$noise_matrix);

add base_image = sim$base_face if you also want the

rendered visualisation (scaling = "matched").

A note on speed

Noise generation is the dominant cost (about 0.4-0.5 s per trial at

256 pixels with default basis settings, in pure R). With default

parameters expect roughly 1-3 minutes per call. A future release may

provide an Rcpp accelerator; for now the function is

intentionally single-shot (“generate once, reuse the

rcisignal_sim object across many analyses”).

To pay the cost only once across R sessions (saveRDS() /

readRDS(), knitr cache = TRUE, sharing with a

collaborator), use one of two portable routes. Pass

rdata_dir = "simdata/" to the simulator so the stimuli

.Rdata keeps a stable path, or hand

stimuli = sim$stimuli to the consumer in place of

rdata_path = sim$rdata_path. The $stimuli list

is self-contained and survives session restarts even after the

.Rdata file is gone.

3. Signal matrix

Almost every analytical function in rcisignal operates

on a single data structure: a signal matrix with one

row per pixel and one column per producer (participant). The two

top-level functions run_reliability() and

run_discriminability() take a signal matrix as input, and

so do the lower-level rel_split_half(),

rel_icc(), rel_loo(),

pixel_t_test(), rel_cluster_test(),

rel_dissimilarity(), infoval(), and

agreement_map_test(). Once you have the signal matrix in

the right shape, the rest of the analysis follows.

3.0 Three pixel matrices that all sound similar: keep them apart

Reverse correlation work involves several types of pixel matrices

that may be easy to confuse. In rcisignal, each one has

exactly one job:

| Data type | What is it? | shape | Where it comes from |

|---|---|---|---|

noise_matrix |

input pool of noise patterns the experiment chose stimuli from. One column per pre-generated noise pattern. |

n_pixels × pool_size

|

input (you give it to the package) |

| noise mask (a.k.a. “per-participant CI”) | one participant’s classification image: a single vector of pixel values, base-subtracted. Conceptually, the weighted average of the noise patterns they “selected” with their responses. |

n_pixels × 1 (one column) |

intermediate |

signal_matrix |

all participants’ noise masks stacked side by side,

one column per producer. This is the central object of

rcisignal. |

n_pixels × n_participants

|

output (you pass it to every rel_*,

run_reliability, run_discriminability

call) |

In sum:

-

noise_matrixdescribes the stimuli (what the experiment showed), and exists before any participant runs the task (see §4.3 for how to read it); - noise mask describes one participant’s CI, and exists after that participant has responded;

-

signal_matrixdescribes the whole sample’s CIs. It is the collection of all individual noise masks lined up by participant (this is the matrix every reliability and discriminability function inrcisignaluses).

You don’t build the noise mask or the signal_matrix by

hand: ci_from_responses_2ifc() and

ci_from_responses_briefrc() do it for you and return a list

whose $signal_matrix element is the matrix you pass to the

metrics in §7-§9.

A small terminology trap. The word mask above means image-shaped overlay (one number per pixel, defined over the whole image grid). It is not the same as a face-region mask (a logical 1/0 stencil that selects “eyes” or “mouth” pixels); those are covered separately in §4.5.

A note on the signal matrix name. Other RC papers sometimes

call this same per-producer object a noise matrix, because the

underlying pixel values are visual noise patterns. Both names are

reasonable: the data really do contain a mixture of noise (the per-trial

random patterns the experiment showed) and signal (the producer’s

sign-weighted aggregation of those patterns). The metrics in this

package are designed to test how much of that mixture is signal rather

than noise, hence signal matrix. To avoid the name collision,

rcisignal’s code reserves noise_matrix

strictly for the input pool above (the row of the table) and

signal_matrix strictly for the per-producer output (the

third row). Whatever you call the object in your own writing, the shape

and interpretation are the same.

Two paths lead to a signal matrix, with different consequences for the metrics that follow.

3.1 Two paths to the signal matrix

Mode 2: from raw trial-level responses

(recommended). Use ci_from_responses_2ifc() for

2IFC pipelines or ci_from_responses_briefrc() for Brief-RC.

Both return a list with $signal_matrix already in the right

shape, base-subtracted, and unscaled (i.e. carrying the raw mask). This

is the safe path for the reliability metrics later on.

res <- ci_from_responses_2ifc(

responses,

rdata_path = "data/rcicr_stimuli.Rdata",

baseimage = "base"

)

signal <- res$signal_matrixMode 1: from pre-rendered CI PNGs on disk. Use

read_cis() to read a directory of PNG/JPEG CIs, followed by

extract_signal() (or the read_signal_matrix()

shortcut that composes both). This path is offered for convenience and

carries a caveat: PNG pixels are necessarily what was rendered to disk

(base + scaling(mask)). After base subtraction, the

resulting signal is scaling(mask) rather than the raw

mask.

signal <- read_signal_matrix(

dir = "data/cis_condition_A/",

base_image_path = "data/base.jpg"

)3.2 Raw mask vs rendered CI

For correlation-based metrics (rel_split_half(),

rel_loo()), the rendered scaling is mostly harmless because

a single uniform linear stretch preserves Pearson correlation. For

variance-based metrics (rel_icc(),

pixel_t_test(), the cluster test, and the Euclidean half of

rel_dissimilarity()), scaling distorts the numbers. The

"matched" (per-CI) scaling option, where each producer’s

mask is stretched to the base’s dynamic range, breaks correlation-based

metrics as well.

rcisignal keeps track of which kind of matrix you have

by labelling each signal matrix with a source tag (either

"raw" or "rendered"). The variance-based

functions check this tag before they run, and stop with an informative

error if you pass a rendered matrix:

- Functions that build raw masks

(

ci_from_responses_2ifc(),ci_from_responses_briefrc()) label the resulting matrix as"raw". - Functions that read PNGs (

read_cis(),extract_signal(),read_signal_matrix()) label it as"rendered". - Variance-based metrics check this label internally and stop with an

informative error when given a

"rendered"matrix, unless you passacknowledge_scaling = TRUEto confirm you have read the caveat.

# This works:

rel_icc(res$signal_matrix)

# This errors with a clear message:

rel_icc(read_signal_matrix("cis/", "base.jpg"))

#> Error: signal_matrix is a rendered CI (PNG-derived); ...

# Override after reading the explanation:

rel_icc(read_signal_matrix("cis/", "base.jpg"),

acknowledge_scaling = TRUE)A safety check (looks_scaled()) also flags hand-built

signal matrices that don’t carry the source label but whose

value range looks rescaled. This check emits a once-per-session warning

rather than stopping the analysis; silence it with

options(rcisignal.silence_scaling_warning = TRUE).

One important exception: rcicr::computeInfoVal2IFC() is

unaffected by display scaling. It reads the raw $ci element

from the rcicr CI list internally

(norm(matrix(target_ci[["ci"]]), "f")) regardless of the

scaling argument used at generation, so the standard 2IFC

infoVal path is safe even when the displayed CIs are rendered.

Hand-rolled implementations (including

rcisignal::infoval(), which has to support Brief-RC where

no upstream function exists) require the raw mask explicitly.

4. Data preparation

This section covers the four objects the package consumes: trial-level responses, the noise matrix, a base image, and an optional face mask.

4.1 Response data

Trial-level data, one row per trial, in any tabular shape

(data.frame, data.table, tibble).

Required columns:

| Column | Type | Meaning |

|---|---|---|

participant_id |

char/int | producer identifier |

stimulus |

int | stimulus / pool id (range depends on method, see below) |

response |

+1 / -1

|

producer’s choice (see below) |

rt (optional) |

numeric | response time in ms (needed only for check_rt()) |

2IFC response coding

Each trial presents two faces drawn from a unique noise pair.

response = +1 if the producer picked the original variant

(base + noise), and -1 if they picked the

inverted variant (base - noise). A common silent failure in

2IFC pipelines is {0, 1} coding produced by experiment

software that records “left” / “right” as 0 / 1;

check_response_coding() flags this with a recode formula in

the suggestion text.

A 2IFC dataset with three participants and four trials each illustrates the format. On every trial the participant saw two stimuli (one original and one inverted noise pattern superimposed on the same base face) and chose one:

responses_2ifc <- data.frame(

participant_id = rep(c("P01", "P02", "P03"), each = 4),

stimulus = rep(1:4, times = 3),

response = c( 1, -1, 1, 1,

-1, 1, 1, -1,

1, 1, -1, 1),

rt = c(820, 910, 750, 880,

680, 1040, 720, 950,

900, 770, 990, 810)

)

responses_2ifc

#> participant_id stimulus response rt

#> 1 P01 1 1 820

#> 2 P01 2 -1 910

#> 3 P01 3 1 750

#> 4 P01 4 1 880

#> 5 P02 1 -1 680

#> 6 P02 2 1 1040

#> 7 P02 3 1 720

#> 8 P02 4 -1 950

#> 9 P03 1 1 900

#> 10 P03 2 1 770

#> 11 P03 3 -1 990

#> 12 P03 4 1 810The 2IFC stimulus column indexes the trial’s

stimulus pair, so its range is 1:n_trials. Every trial

has its own unique pair, so an id never repeats across trials within a

participant.

Brief-RC response coding (Schmitz et al. 2024)

Each trial presents 2k noisy faces (k

original images, base + noise_i, and k

inverted images, base - noise_i), drawn from k

distinct pool noise patterns. The producer picks one. The data records

one row per trial: stimulus = pool id of

the chosen noise pattern; response = +1 if original chosen,

-1 if inverted. Unselected faces are absent from the data;

do not pad them as zero rows. The same row format applies to both

validated Brief-RC variants (Brief-RC 12 with k = 6,

Brief-RC 20 with k = 10); the analysis pipeline is

identical (see §13.1 for the formula being symmetric in

k).

A Brief-RC 12 dataset with the same three participants and four trials each illustrates the format:

responses_briefrc <- data.frame(

participant_id = rep(c("P01", "P02", "P03"), each = 4),

stimulus = c( 47, 112, 8, 263,

91, 17, 204, 55,

188, 142, 261, 73),

response = c( 1, -1, 1, 1,

-1, 1, -1, 1,

1, 1, -1, -1),

rt = c(1100, 1340, 980, 1210,

890, 1450, 1020, 1130,

1280, 1190, 1360, 1080)

)

responses_briefrc

#> participant_id stimulus response rt

#> 1 P01 47 1 1100

#> 2 P01 112 -1 1340

#> 3 P01 8 1 980

#> 4 P01 263 1 1210

#> 5 P02 91 -1 890

#> 6 P02 17 1 1450

#> 7 P02 204 -1 1020

#> 8 P02 55 1 1130

#> 9 P03 188 1 1280

#> 10 P03 142 1 1190

#> 11 P03 261 -1 1360

#> 12 P03 73 -1 1080What pool_size means concretely

In Brief-RC the stimulus column ranges from

1 to pool_size, where pool_size

is the total number of distinct noise patterns generated for the

experiment, i.e., the number of columns in the

noise_matrix (§4.3). On every trial the software draws 6

distinct pool patterns and presents each in both original and inverted

form, giving 12 alternatives. Across many trials, the same pool id can

therefore re-appear (and a producer can pick the same pool id more than

once). The exact re-use rate depends on the experimenter’s sampling

design, of which three regimes are common.

-

Without replacement at the presentation level: the

only path open when

n_trials x stim_per_trial == pool_size. Each pool item is shown exactly once across the whole task. A producer cannot choose the same pool id twice. Schmitz et al.- Experiment 1 used this regime (60 trials x 12 alternatives = 720

presentations, exactly matching their

pool_size = 720).

- Experiment 1 used this regime (60 trials x 12 alternatives = 720

presentations, exactly matching their

-

With replacement at the presentation level:

required when

n_trials x stim_per_trial > pool_size. Pool items are drawn randomly with possible repetition. A producer can choose the same pool id on two different trials (with the same response sign or with opposite signs). Example: 300 trials x 12 alternatives = 3600 presentations drawn from a 1500-item pool. - Hybrid designs (partial blocks, Latin squares, counterbalanced subsets per condition). Treat as with-replacement at the analysis level unless your design guarantees no repetition.

rcisignal is agnostic to the regime. Internally, before

computing the per-producer mask, it collapses any duplicated

stimulus ids in a producer’s data using

mean(response) exactly as Schmitz’s genMask()

formulation does. So if the same pool item is chosen twice with the same

sign, it contributes once with full weight; if chosen twice with

opposite signs, the two cancel and it contributes zero. The

genMask() divisor is

length(unique(chosen_stimuli)), not

n_trials.

Structural differences between 2IFC and Brief-RC data

| Aspect | 2IFC | Brief-RC 12 |

|---|---|---|

| Alternatives shown per trial | 2 (one original + one inverted) | 12 (six original + six inverted, drawn from six pool patterns) |

| Rows recorded per trial | 1 | 1 |

What stimulus indexes |

The trial’s stimulus pair | The chosen pool item only |

Range of stimulus

|

1 to n_trials

|

1 to pool_size

|

| Same id can repeat across trials | No (each trial has its own pair) | Depends on the experimenter’s sampling design (see above) |

| Unchosen alternatives recorded | Not applicable (only two shown) | No (treated as absent; do not pad as zero rows) |

read_responses() is a small wrapper around

data.table::fread() that validates the required

columns:

responses <- read_responses("study1data.csv", method = "2ifc")4.2 The .RData from

rcicr::generateStimuli2IFC()

The 2IFC pipeline uses an .RData file produced by

rcicr::generateStimuli2IFC(). The objects in this file that

the analysis actually uses are:

-

base_faces: the loaded base-face matrices, grayscale in[0, 1]. List names (e.g."base") become thebaseimageargument used by later functions.base_face_filescarries the matching source paths. -

img_size: side length of the (square) image in pixels. -

p: the noise basis (with$patchesand$patchIdx), the sinusoidal dictionary used to assemble each trial’s noise. -

stimuli_params: a named list of matrices (one per base label) where each row carries one trial’s contrast weights. Reconstruct triali’s noise viarcicr::generateNoiseImage(stimuli_params[[base]][i, ], p).

n_trials, seed, label,

stimulus_path, trial,

generator_version, and use_same_parameters are

bookkeeping fields, not consumed by analysis.

reference_norms is created and inserted in place by

rcicr::computeInfoVal2IFC() on its first call; copy the

rdata first if you want it untouched.

The actual per-trial noise patterns are not stored

in the rdata. They are reconstructed on demand from

stimuli_params and p;

rcisignal::read_noise_matrix() does this automatically

(§4.3) and caches the result.

On macOS the file is saved with a lowercase .Rdata

extension; list.files(pattern = "\\.RData$") is

case-sensitive by default and will miss it. Use

ignore.case = TRUE when searching.

4.3 The noise matrix

The noise matrix is an n_pixels x pool_size numeric

matrix where each column is the noise pattern shown for one trial (or

pool item). It serves as input to CI computation, distinct from the

signal matrix, which is an output.

read_noise_matrix() is a single entry point that detects

the file format automatically. For formats that are slow to parse, it

saves a faster .rds copy next to the original and re-uses

it on subsequent calls:

# Plain text matrix (Schmitz et al. 2024 OSF format).

# First call parses + writes data/noise_matrix.rds.

nm <- read_noise_matrix("data/noise_matrix.txt")

# Second call loads from the cache (fast).

nm <- read_noise_matrix("data/noise_matrix.txt")

# rcicr .Rdata source: reconstructs each trial via

# rcicr::generateNoiseImage() and caches the result.

nm <- read_noise_matrix("data/rcicr_stimuli.Rdata",

baseimage = "base")The .rds is rebuilt automatically if you change the

source file (each cached file records the source’s size and modification

time, and is rebuilt when either changes). A once-per-session

cli line announces “cache built” or “cache reused”; silence

it with

options(rcisignal.silence_cache_messages = TRUE).

For the rcicr .Rdata reconstruction path, the upstream

rcicr package must be installed (it’s a Suggests).

Subsequent reads from the .rds cache do not need it.

validate_noise_matrix() runs basic sanity checks and

returns a diagnostic-style result rather than aborting:

validate_noise_matrix(nm,

expected_pixels = 256L * 256L,

expected_stimuli = 300L)4.4 The base image

The base face used at stimulus generation. Must be:

- Square (e.g. 256x256 or 512x512).

- Grayscale (single channel).

-

Pixel range

[0, 1](the conventionpng::readPNGandjpeg::readJPEGproduce). - Centred with eye/nose/mouth roughly at the geometry assumed by the default oval mask (eyes upper third, mouth lower third).

For a research-quality base, the webmorphR package by

DeBruine (2022) is the current best-in-class tool. The example below

uses R’s native pipe (|>, available since R 4.1) because

that is the idiom the webmorphR documentation uses; the rest of this

vignette sticks to base R for consistency.

library(webmorphR)

stim <- read_stim("path/to/raw_face_images/") |>

auto_delin() |> # automatic landmark delineation

align(procrustes = TRUE) |> # Procrustes alignment

crop(width = 0.85, height = 0.85) |> # tight crop

to_size(c(256, 256)) |> # rcicr-friendly size

greyscale() |>

avg() # morph into one average face

write_stim(stim, dir = "stimuli/", names = "base", format = "png")The output stimuli/base.png goes into

rcicr::generateStimuli2IFC(base_face_files = list(base = "stimuli/base.png")).

4.5 Face-region masks

rcisignal’s pixel-wise statistics aggregate or compare

across pixels, so the choice of which pixels enter the analysis

materially changes the reported number. A mask that includes hair and

background dilutes signal-localised effects roughly in proportion to the

area added.

Three ways to obtain a mask:

# 1. Parametric, no extra dependencies. Default oval geometry

# is a typical centred-face oval; tune via centre,

# half_width, half_height.

fm <- make_face_mask(c(256L, 256L), region = "full")

# Sub-regions for region-restricted analyses. Three of these

# (eyes, left_eye, right_eye) are axis-aligned rectangles

# tunable via the `region_bounds` argument; the rest are

# ellipses tunable via `centre`, `half_width`, `half_height`.

make_face_mask(c(256L, 256L), region = "eyes") # wide rectangle, both eyes

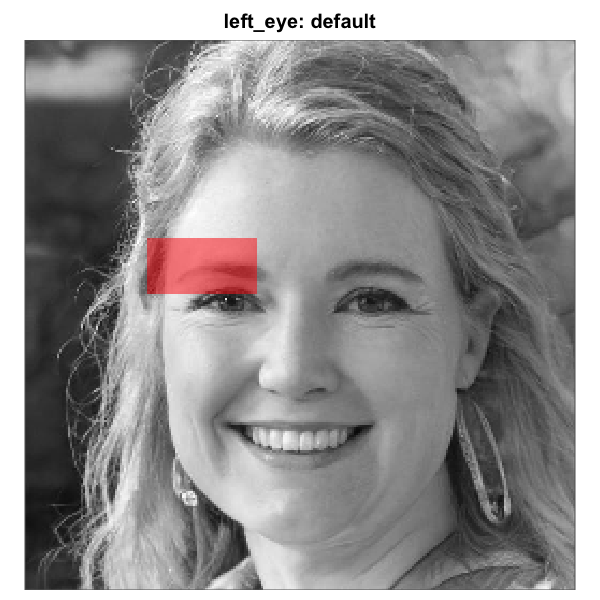

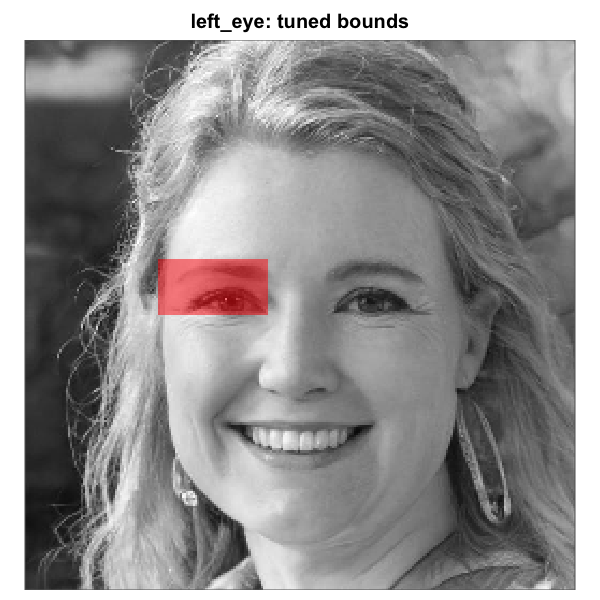

make_face_mask(c(256L, 256L), region = "left_eye") # rectangle, viewer's left eye

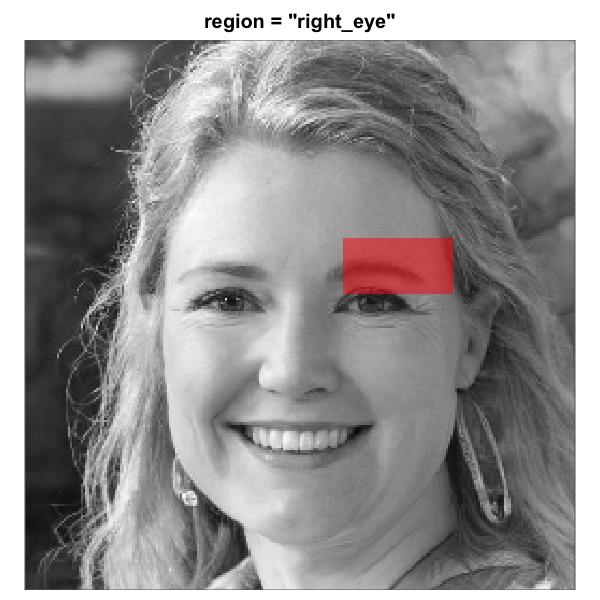

make_face_mask(c(256L, 256L), region = "right_eye") # rectangle, viewer's right eye

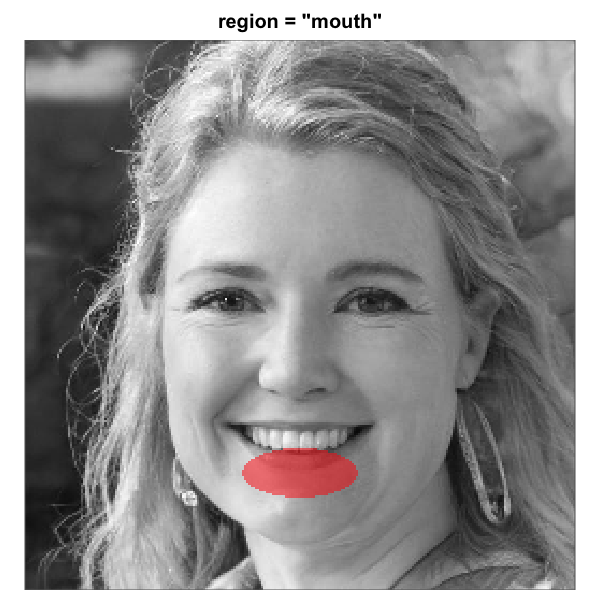

make_face_mask(c(256L, 256L), region = "mouth")

make_face_mask(c(256L, 256L), region = "nose")

make_face_mask(c(256L, 256L), region = "upper_face")

make_face_mask(c(256L, 256L), region = "lower_face")

# 2. From a hand-painted PNG / JPEG mask (e.g. from webmorphR or

# GIMP):

fm <- read_face_mask("masks/oval_256.png",

expected_dims = c(256L, 256L))

# 3. From a numeric matrix in code:

fm <- as.vector(custom_mask_matrix > 0.5)A mask can be supplied as either a logical vector of length

n_pixels (with pixels in the same order R uses when it

flattens a matrix into a vector, i.e. column by column) or as a logical

matrix with the image dimensions. Every mask argument in

the package accepts both forms.

plot_face_mask() renders any of those forms over the

base face, so you can verify alignment before passing the mask to a

metric:

plot_face_mask(fm, img_dims = c(256L, 256L),

base_image = "data/base.jpg",

main = "Full face oval (package default)")To overlay the mask directly on a specific base image (the workflow

you want when the question is “does this mask cover the right region of

this specific base image?”), use plot_mask_overlay():

# Either pass a prebuilt mask:

plot_mask_overlay(base_image = "data/base.jpg", mask = fm)

# Or use the `region =` shortcut to skip the make_face_mask()

# call. `region_bounds` is forwarded for rectangle-region tuning.

plot_mask_overlay(base_image = "data/base.jpg", region = "left_eye")Apply masks symmetrically. When a mask enters the

analysis, apply it to every term that goes into the statistic.

For infoval(), this means passing the mask to the function

so both the observed Frobenius norm and the reference distribution are

restricted to the same pixels. For rel_*() functions, pass

the mask via the mask argument; the package handles

symmetric application internally. Mixing a masked observed value with an

unmasked reference (or vice versa) yields a number that has no

defensible interpretation.

Visualising what a mask does to a base face

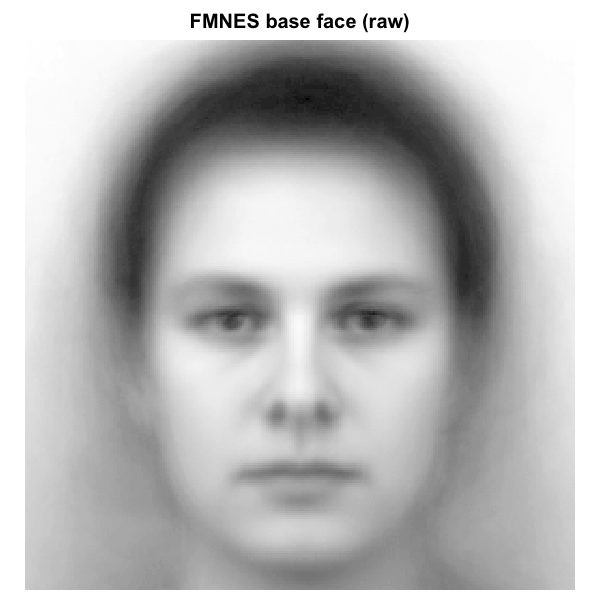

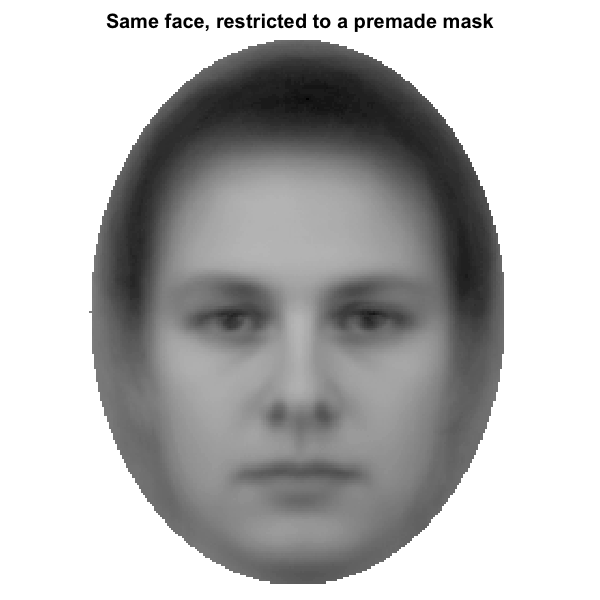

A mask is a logical vector that decides which pixels enter the analysis. Every pixel inside the mask contributes to the statistic; every pixel outside is ignored. Imposing a premade oval mask on the FMNES base face from the Karolinska Directed Emotional Faces stimuli (Lundqvist, Flykt, & Ohman, 1998), resized to 256 x 256, the visible difference is what is shown below.

Effect of a face-region mask on a base image. Left: raw FMNES base face

(Karolinska Directed Emotional Faces; Lundqvist et al. 1998). Right:

same face with a premade full-face oval mask applied; pixels outside the

mask are dimmed to light grey to make the analysed region explicit. The

reliability and discriminability metrics in this package will only see

the inside-mask pixels when a mask is supplied via the mask

argument.

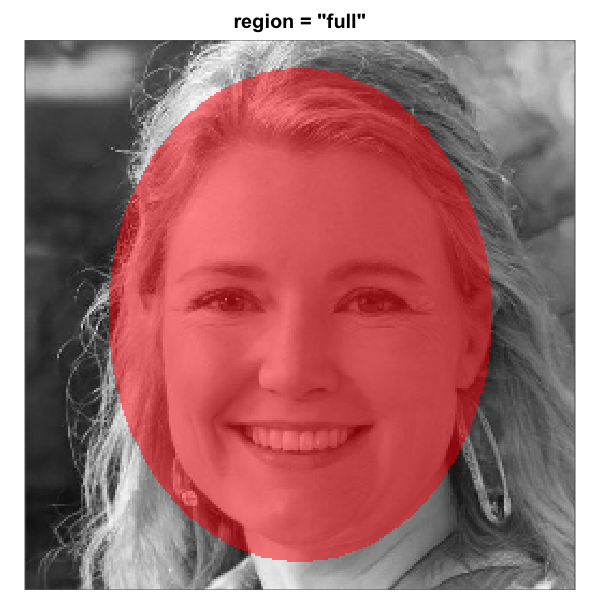

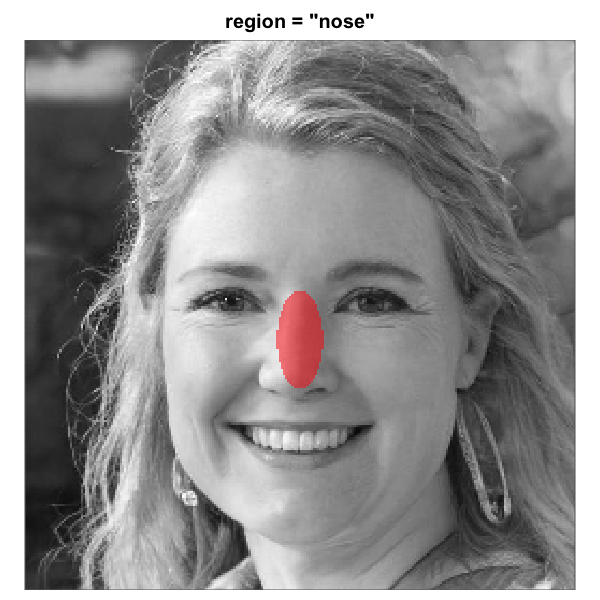

When make_face_mask() is used to generate the mask

parametrically, eight region presets are available. Imposed on the same

base face (an artificial face generated with thispersondoesnotexist.com

so no consent or licensing concerns apply), they look as follows. Five

regions are ellipses (full, nose,

mouth, upper_face, lower_face);

the three eye regions (eyes, left_eye,

right_eye) are axis-aligned rectangles, tunable to a

specific base via the region_bounds argument (see the

tuning subsection below). All eight region geometries are this package’s

heuristics for a centred-portrait base; they are not taken from any

specific published paper. The convention of applying a full-face oval

before pixel-wise metrics follows prior practice in social-face RC

(e.g., Oliveira et al., 2019; Ratner et al., 2014; Schmitz, Rougier,

& Yzerbyt, 2024).

The eight built-in face-region masks rendered over the same

artificial-person base face (256 x 256). Each translucent red overlay

marks the pixels that pass through the mask; pixels outside are excluded

from the analysis. All eight region geometries are this package’s

heuristics for a centred-portrait base. The three rectangle eye regions

are independent of the full-oval geometry and tunable via

region_bounds.

The default geometry assumes the eyes sit roughly in the upper third

of the image and the mouth in the lower third (centred square base, face

filling most of the frame). Pass centre,

half_width, and half_height to

make_face_mask() if your base image has different

framing.

Tuning a sub-region for a non-default base face

The default sub-region geometry is calibrated for a centred, frontal

base face that fills most of the frame. The elliptical regions are

positioned relative to the full-face oval (centre,

half_width, half_height); the rectangle eye

regions are independent of the oval and tuned via their own

region_bounds. When the base image violates the

centred-portrait assumption, the parametric overlay drifts off the

intended feature and the metrics computed against it no longer mean what

their name implies.

There are two tuning routes, depending on the region’s shape:

-

Rectangle regions (

"eyes","left_eye","right_eye") take aregion_bounds = c(row_min, row_max, col_min, col_max)argument that sets the rectangle’s edges directly in 0-1 image fractions. Independent of the full-oval geometry; the rectangle for either eye can move without dragging the other along, which is the friction the previous two-ellipses-that-moved-together design ran into. -

Elliptical regions (

"full","nose","mouth","upper_face","lower_face") are positioned relative to the full-face oval and tuned via the globalcentre,half_width,half_height. For independent per-region adjustment of an ellipse (a common need with non-portrait or AI-generated bases), the exportedshift_mask()helper slides the mask by a number of pixels in any direction.

Rectangle regions: tune region_bounds

region_bounds accepts a length-4 numeric vector

c(row_min, row_max, col_min, col_max) in 0-1 image

fractions. Each pair must satisfy row_min < row_max and

col_min < col_max, and every entry must lie in

[0, 1]. The left and right eye rectangles are independent,

so each can be nudged separately to match a specific base.

# Tune just the viewer's left-eye rectangle on a base whose

# eye line sits a few percent below the heuristic default. The

# right-eye rectangle is unaffected.

left_eye_tuned <- make_face_mask(

c(256L, 256L), region = "left_eye",

region_bounds = c(0.40, 0.50, 0.24, 0.44)

)

# Verify the alignment visually before passing to a metric.

# plot_mask_overlay() also accepts a `region =` shortcut that

# builds the mask internally; pass region_bounds the same way.

plot_mask_overlay(base_image = "data/base.png",

region = "left_eye",

region_bounds = c(0.40, 0.50, 0.24, 0.44))If you measured the rectangle’s edges in pixels (by zooming into the

base image in your viewer or plot() window), use

region_bounds_from_pixels() to convert to the 0-1 fractions

region_bounds expects:

# "The viewer's left eye sits in rows 100-130, cols 60-115 on

# this 256-pixel base." Convert once, pass straight through.

bounds <- region_bounds_from_pixels(

row_min = 100, row_max = 130,

col_min = 60, col_max = 115,

img_dims = c(256L, 256L)

)

make_face_mask(c(256L, 256L), region = "left_eye",

region_bounds = bounds)Rendered over the artificial-person base used earlier, the default

left_eye rectangle and the tuned variant look as

follows:

Rectangle left_eye mask before and after tuning on a base

face whose eye line sits below the default. Left: default

region_bounds, sitting on the eyebrow. Right: nudged

downward by passing

region_bounds = c(0.40, 0.50, 0.24, 0.44). Because the

rectangle eye regions are independent of the full-oval geometry, the

right-eye rectangle would remain untouched.

Elliptical regions: global centre or per-region

shift

If every feature is offset in the same direction, pass the global

centre (and optionally half_width,

half_height) to make_face_mask():

# Whole-face shift: nose, mouth, and the full oval all move

# together. Rectangle eye regions are unaffected.

make_face_mask(c(256L, 256L), region = "mouth",

centre = c(0.55, 0.50)) # 5% downFor independent per-region tuning of an ellipse, the exported

shift_mask() helper slides the mask by a number of pixels

in any direction. Pixels shifted off the image are dropped, and the

newly exposed edge is filled with FALSE.

# Default mouth mask, reshaped from a flat logical vector

# (pixels in column-by-column order) back into a 256 x 256

# grid.

mouth_mask_default <- matrix(

make_face_mask(c(256L, 256L), region = "mouth"),

nrow = 256, ncol = 256

)

# Tune. On a 256-pixel image, 20 pixels is about 8 % of the

# height and 8 pixels is about 3 % of the width.

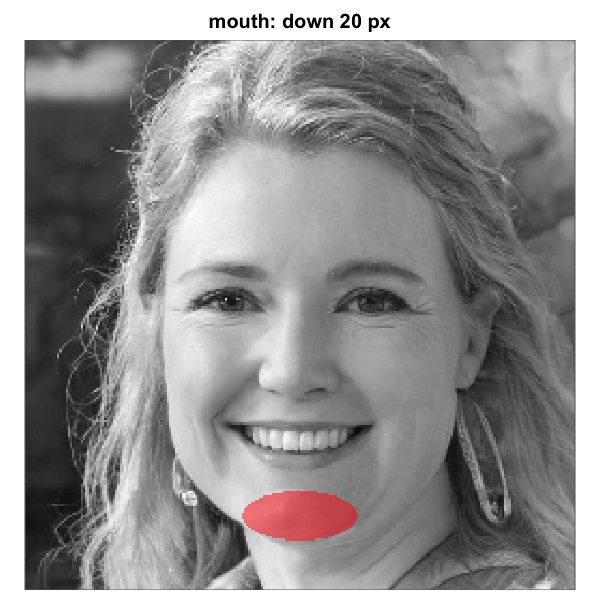

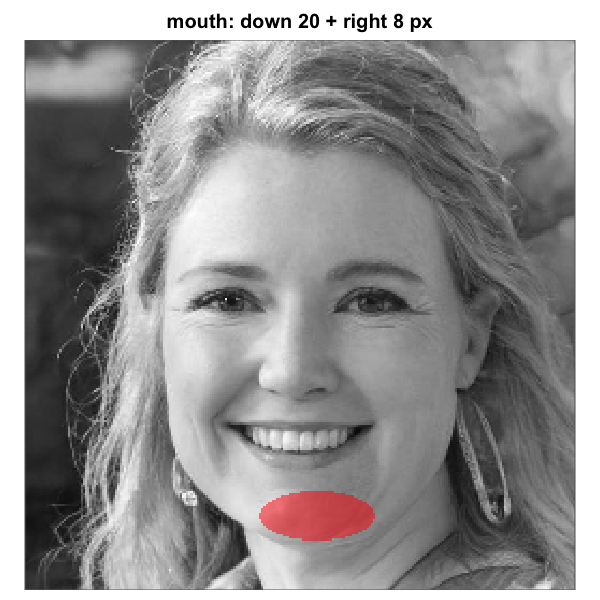

mouth_mask_v <- shift_mask(mouth_mask_default, down = 20)

mouth_mask_vh <- shift_mask(mouth_mask_default, down = 20, right = 8)shift_mask() accepts both down and

right and combines them in a single call, so vertical-only

and vertical-plus-horizontal tuning share the same idiom. It works on

either a column-major logical vector (pass img_dims) or a

logical matrix (returned in the same shape). Both infoval()

and the rel_*() family accept a logical matrix as the

mask argument, so the tuned grid can be passed in directly

without flattening.

Rendered over the same artif_base.png shown earlier

(where the mouth sits noticeably below the default), the default mask

and the two tuned variants look as follows:

Elliptical mouth-region mask before and after shift-tuning on a base

face whose mouth sits below the default. Left: default geometry. Middle:

shifted down by ~20 pixels (about 8 percent of image height). Right:

same vertical shift plus an ~8-pixel rightward shift. Each panel renders

one of the matrices produced by shift_mask() above. The

same recipe works for nose, upper_face,

lower_face, and the full oval; the three

rectangle eye regions use region_bounds instead.

Iterate with plot_mask_overlay() (overlay on the base

image) or plot_face_mask() until the overlay sits where you

want, then pass the tuned mask to infoval() or any

rel_*() function exactly as you would a parametric mask.

Useful shift magnitudes are typically a few pixels to a few dozen on a

256-pixel image; if you find yourself needing more than that, the whole

face is probably misaligned and centre should be retuned at

the global level via make_face_mask() instead.

5. Step 1: diagnose the inputs

Before computing CIs, run the diagnostic battery. The single entry

point is run_diagnostics(), which invokes every implemented

check whose required inputs are available and gathers the results into

one printable report.

5.1 A first run

The smallest meaningful call needs only the response data and the method:

report <- run_diagnostics(responses, method = "2ifc")

reportThe output looks like:

== Data-quality report (2ifc) ==

[PASS] Response coding

All 60,000 responses coded {-1, 1}.

[PASS] Trial counts

All 200 producers at 300 trials.

[PASS] Duplicates

No duplicate rows.

[PASS] Response bias

No constant responders, no |mean| > 0.6.

Summary: pass=4, warn=0, fail=0, skip=0

Skipped checks:

- check_rt (no col_rt)

- check_stimulus_alignment (no rdata or noise_matrix)

- check_version_compat (no rdata)

- compute_infoval_summary (no rdata + infoval_iter)

- check_response_inversion (no rdata + infoval_iter)

- check_rt_infoval_consistency (no rdata + infoval_iter + col_rt)The “Skipped checks” block is informational, not a failure: each listed check has prerequisites the call did not supply. The next section walks through how to unlock each.

5.2 The result object

run_diagnostics() returns an

rcisignal_diag_report with three fields:

-

$results: a named list ofrcisignal_diag_resultobjects, one per check that ran. -

$skipped_checks: character vector naming checks that were not run, each with the reason in parentheses. -

$method:"2ifc"or"briefrc".

Each rcisignal_diag_result has:

-

$status: one of"pass","warn","fail", or"skip". -

$label: short check name. -

$detail: character vector of explanation lines. -

$data: optional list of programmatic data (flagged participants, count tables, group-level statistics).

summary(report) returns a flat data frame with

check, status, label columns for

programmatic filtering. print() is the human-readable view

shown above.

5.3 The check_* family

Eight individual check functions cover the input-side battery. Each

takes responses plus its check-specific arguments and

returns an rcisignal_diag_result.

-

check_response_coding()verifies{-1, +1}coding. PASS for{-1, 1}; WARN with a recode formula for{0, 1}or{1, 2}; FAIL otherwise. The{0, 1}miscoding produced by experiment software that records “left” / “right” as 0 / 1 is a common silent failure in 2IFC. -

check_trial_counts(expected_n = ...)verifies that every producer has the expected number of trials.expected_ncan be a scalar or a named vector. PASS if all match; WARN at <= 10% off; FAIL above. -

check_duplicates()flags duplicate rows. PASS at 0; FAIL if >= 2 full duplicates and > 5% of rows; WARN otherwise. -

check_response_bias(bias_threshold = 0.6)flags constant responders (FAIL) and producers with|mean(response)| > bias_threshold(WARN; default 0.6 corresponds to roughly an 80/20 split). -

check_rt(col_rt = ...)scans response times for fast-clicking (default RT < 400 ms), implausibly slow trials, and low within-subject coefficient of variation. Defaults are conservative; tune them to your task. -

check_stimulus_alignment(rdata = ... | noise_matrix = ...)validates thatstimulusids fall inside the pool. FAIL on any out-of-range id; WARN if > 50% of the pool is unreferenced. -

check_version_compat(rdata = ...)(2IFC only) compares thegenerator_versionrecorded in the rdata to the installedrcicrversion. PASS if matching; WARN otherwise. The warning is informational (older datasets remain usable, and the flag simply prompts a spot-check). -

check_response_inversion(rdata = ..., infoval_iter = ...)detects whole-batch sign-flipped data by computing per-producer infoVal with the original responses and again with the negated responses. FAIL if >= 50% of producers are flagged as inverted; WARN if any are.

5.4 diagnose_infoval()

diagnose_infoval() is the recommended diagnostic for the

question “is my data informative at all?”. It runs a six-step

walk-through that catches every common low-infoVal cause:

- Compute observed Frobenius norm per producer (and group-mean).

- Compare against a reference distribution at each producer’s actual

trial count (closes the calibration gap in

rcicr::generateReferenceDistribution2IFC(), which keys on pool size). - Apply a face mask (default

"auto"= parametric full-face oval) and repeat. - Compare unmasked vs masked z to see whether masking lifts or depresses signal.

- Sanity-check with a synthetic random responder (should land at

|z| < 1). - Report whether the group-mean CI clears z = 1.96 even when per-producer medians do not.

iv <- diagnose_infoval(

responses,

method = "2ifc",

rdata = "rcic_stimuli.Rdata",

iter = 1000L,

face_mask = "auto",

seed = 1L

)

iv # PASS / WARN / FAIL with rich data attached to $dataThe status logic:

-

PASS: group-mean masked z >= 1.96 and

random-responder z is within

|z| < 1. Data is healthy. -

FAIL: random-responder

|z| > 2. Reference distribution is miscalibrated; almost always indicates a noise-matrix or pool-id mismatch. - WARN: anything in between. Usually means the per-producer signal is genuinely modest but the group CI is informative (typical pattern for trait inferences).

5.5 compute_infoval_summary()

A small convenience function around

rcicr::computeInfoVal2IFC() for the legacy 2IFC path. It

returns a per-participant z table plus a pass/warn summary, useful for

direct comparison with previously published rcicr numbers.

For the Brief-RC path or for the trial-count-matched reference, prefer

diagnose_infoval() or infoval() directly.

5.6 check_rt_infoval_consistency()

Cross-validates infoVal against RT quality by correlating per-producer infoVal with per-producer median RT. A strong negative correlation (correlation <= -0.30) suggests that fast clickers are also producing low-infoVal masks, indicating a population-level pattern rather than a single-producer fluke. WARN if the correlation passes the threshold; PASS otherwise.

5.7 Conditional checks and required arguments

When the call carries only response data, four checks run and six are skipped. Each skipped check requires a specific additional argument:

| Check | Required argument |

|---|---|

check_rt |

col_rt |

check_stimulus_alignment |

rdata (2IFC) or noise_matrix

(Brief-RC) |

check_version_compat |

rdata (2IFC only) |

compute_infoval_summary |

rdata + infoval_iter

|

check_response_inversion |

rdata + infoval_iter

|

check_rt_infoval_consistency |

rdata + infoval_iter +

col_rt

|

infoval_iter defaults to NULL because the

reference distribution simulation at 10,000 iterations takes minutes on

first call. Opt in explicitly when you are ready to wait.

report <- run_diagnostics(

responses,

method = "2ifc",

rdata = "rcic_stimuli.Rdata",

baseimage = "base",

col_rt = "rt",

expected_n = 300L,

infoval_iter = 1000L,

face_mask = "auto"

)With every input supplied, the “Skipped checks” block is empty.

6. Step 2: compute classification images

Once the diagnostics pass, compute CIs.

6.1 From raw responses

The 2IFC path delegates to rcicr::batchGenerateCI2IFC()

and returns a list with $signal_matrix (raw mask, ready for

rel_*), optionally $rendered_ci for

visualisation, plus metadata.

res <- ci_from_responses_2ifc(

responses,

rdata_path = "rcic_stimuli.Rdata",

baseimage = "base",

scaling = "none", # raw mask only; render later if needed

keep_rendered = FALSE

)

dim(res$signal_matrix) # n_pixels x n_participants

attr(res$signal_matrix, "source") # "raw"Behind the scenes the function takes care of the steps that are easy

to get wrong when calling rcicr directly: it loads the helper packages

rcicr expects (foreach, tibble,

dplyr), checks that responses are coded

{-1, +1}, runs single-threaded by default, and matches the

.Rdata filename in a case- insensitive way.

Brief-RC support is built into rcisignal directly (rcicr v1.0.1 does

not include Brief-RC). The implementation follows Schmitz’s

genMask() formula step for step, including the rule that

collapses repeated stimulus ids by averaging their responses:

res <- ci_from_responses_briefrc(

responses,

rdata_path = "rcic_stimuli.Rdata", # for the noise pool

base_image = "base.jpg", # path or numeric matrix in [0, 1]

method = "briefrc12"

)You can pass a pre-loaded noise_matrix instead of

rdata_path; useful when you have a non-rcicr-generated pool

(e.g. Schmitz’s OSF text matrix).

6.2 From pre-rendered CIs

When you already have one CI image per producer on disk (PNG or

JPEG), read_signal_matrix() reads them and subtracts the

base image in one call:

signal <- read_signal_matrix(

dir = "data/cis_condition_A/",

base_image_path = "data/base.jpg"

)

attr(signal, "source") # "rendered"read_cis() and extract_signal() are also

available on their own, for cases where you want to do something between

reading the PNGs and subtracting the base (e.g. masking, cropping, or

swapping the base image).

The first call to any Mode-1 reader emits the once-per-session

warning that PNG-derived signals are scaled. Silence with

options(rcisignal.silence_scaling_warning = TRUE) or pass

acknowledge_scaling = TRUE when calling.

6.3 CI scaling options

rcicr::batchGenerateCI2IFC() exposes a

scaling argument with five values:

-

"autoscale": stretches each producer’s mask to a fixed symmetric range. The rcicr default and the convention used in Schmitz et al. (2024) Experiment 2. -

"matched": stretches each mask to the base image’s range. Per-CI, so it breaks correlation-based metrics as well (a uniform scaling preserves Pearson, but a per-CI stretch does not). -

"independent": likeautoscalewith each CI’s stretch computed independently (no shared range across CIs). -

"constant": multiplies the mask by a fixed constant. -

"none": no scaling. Output isbase + raw_mask.

The shipped $signal_matrix is the raw unscaled mask

regardless of which scaling you pick; the

scaling argument only affects the optional

$rendered_ci field that keep_rendered = TRUE

returns.

Recommendation: feed the raw $signal_matrix to every

metric. For rcicr::computeInfoVal2IFC() the choice does not

matter (it reads $ci internally). For Brief-RC, treat any

non-none scaling as visualisation-only and never pass it to

rel_* or to hand-rolled infoVal.

7. Step 3: within-condition reliability

With the signal matrix in hand, the question is whether each condition’s group-level CI is stable: would you obtain the same group pattern from a different half of the producers? “Reliable” in the psychometric sense is shorthand for the producers’ CIs agree with each other enough that averaging them recovers the same pattern in repeated samples. Two complementary metrics address this question directly, alongside an influence-screening diagnostic that is sometimes confused with reliability.

The two reliability metrics:

-

rel_split_half()asks how well one random half of the producers reproduces the other half’s group CI. Repeating the split many times gives a sampling distribution for the agreement. -

rel_icc()asks how much of the pixel-by-producer signal variance is attributable to consistent producer-level patterns versus residual noise. It is the same intraclass correlation used in measurement theory and inter-rater reliability work.

The third metric (rel_loo()) is an influence screen: it

flags individual producers whose removal noticeably shifts the group CI,

useful for catching coding errors or outlier strategies but not itself a

reliability number.

A short note on what these metrics do not address. Reliability here is internal: would the same producers, if split differently, have produced the same CI? Whether the CI accurately captures the producer’s mental representation of the trait is a separate validity question, typically addressed by an external rater study, and sits outside the package.

7.1 rel_split_half()

Background. Split-half reliability is an old psychometric

trick (Spearman, 1910; Brown, 1910): if a measurement is internally

consistent, splitting it into two halves and correlating the halves

should give a high correlation. In the RC setting, the “halves” are two

random subsets of producers, and the quantities being correlated are the

pixels of the group-mean CI computed from each half. The catch is that

each half is built from N/2 producers rather than

N, so the half-half correlation underestimates the

reliability of the full N-producer CI. The Spearman-Brown correction

r_sb = (2 r_hh) / (1 + r_hh) projects the half-half

correlation up to the reliability the full sample would have if the

underlying signal really is shared. Repeating the split many times and

averaging both quantities reduces the dependence on any single random

partition.

In code, the function does exactly this:

Randomly partition the producers into two halves, compute the

group-level CI for each half (rowMeans()), correlate them,

and average across many permutations. The function reports both the mean

per-permutation r (r_hh) and the

Spearman-Brown projected full-sample reliability

(r_sb = (2 r_hh) / (1 + r_hh)). The headline number is

typically r_sb.

sh <- rel_split_half(signal_matrix,

n_permutations = 2000L,

seed = 1L)

sh

plot(sh)Permutation is over producers (not pixels) so that each producer’s

spatial structure is preserved. For odd N, one randomly-chosen producer

is dropped per permutation (re-drawn each iteration) so both halves

contain floor(N/2) producers.

The null argument adds an empirical chance baseline:

-

null = "permutation": per iteration, generates fresh Gaussian noise per producer (no shared spatial structure), then recomputesr_hh. Centred at 0 and useful as a worst-case floor. -

null = "random_responders": simulatesncol(signal_matrix)random responders using the samegenMask()machinery asinfoval()’s reference. This baseline preserves the pixel correlation structure of real noise patterns and tracks the empirical chance baseline of an actual RC experiment more closely. Requiresnoise_matrix.

sh <- rel_split_half(signal_matrix,

null = "random_responders",

noise_matrix = nm,

n_permutations = 2000L,

seed = 1L)

sh$r_hh # observed

sh$r_hh_null_p95 # 95th percentile of the null

sh$r_hh_excess # observed - null median

sh$r_sb_excess # same, projected via Spearman-BrownReport $r_sb as the headline; $r_sb_excess

as the above-chance increment when a null is requested.

$ci_95 / $ci_95_sb are percentile 95% CIs on

the observed distribution.

rel_split_half_null() exposes the same null-distribution

simulation as a standalone function, useful when you want to precompute

a null and reuse it across conditions with the same producer count.

7.2 rel_icc()

Background. The intraclass correlation coefficient (ICC) is a family of statistics for asking how much of the variability in repeated measurements is attributable to differences between the objects of measurement (here, producers) versus residual noise (McGraw & Wong, 1996; Koo & Li, 2016). Every producer has one CI vector with one entry per pixel, and the ICC asks how consistently the producers agree on that pixel-by-pixel pattern. A high ICC means producers’ CIs are similar to each other relative to noise; a low ICC means they are not.

The “(3,*)” label is McGraw & Wong’s notation for a two-way

mixed-effects model in which the column factor (pixels in our case) is

fixed and the row factor (producers) is random. The fixed-pixels choice

reflects the reality of an RC experiment: the image grid is not a random

sample from a population of pixels; it is the same set of pixels across

all producers. The “3,1 vs 3,k” distinction is whether you want the

reliability of a single producer’s CI (3,1) or of the

group-averaged CI across k producers

(3,k).

rel_icc() returns both, computed from a two-way mixed

model with pixels fixed and producers random:

- ICC(3,1) answers “how informative is one producer’s CI as a noisy estimate of the group pattern?”.

-

ICC(3,k) answers “how stable is the group-mean CI

across

kproducers?”. Usually the headline.

ic <- rel_icc(signal_matrix)

ic # prints ICC(3,1), ICC(3,k), MS rows / cols / errorThe function computes both quantities directly from ANOVA mean

squares, which scales to large image grids that would otherwise run out

of memory. Results agree with psych::ICC() on smaller

matrices where both can be run.

ICC(3,) is appropriate when pixels are fixed. ICC(2,)

(two-way random) treats pixels as a random sample from a pixel

population, which the image grid is not, even when ICC(2,) and

ICC(3,) give similar numbers at high pixel counts. Use

variants = c("3_1", "3_k", "2_1", "2_k") to report ICC(2,*)

side-by-side when comparability with reports that use the two-way-random

model is needed.

ICC is variance-based, so it errors on a "rendered"

source matrix unless acknowledge_scaling = TRUE is passed.

Rendered scaling corrupts ICC values in non-recoverable ways, so the

default behaviour is conservative.

A once-per-session warning fires when

n_targets > 50,000 and ICC(3,k) is requested, flagging

that ICC(3,k) tends toward 1 at large image sizes (it is not

resolution-comparable). Report ICC(3,1) as the primary statistic for

cross-resolution comparisons.

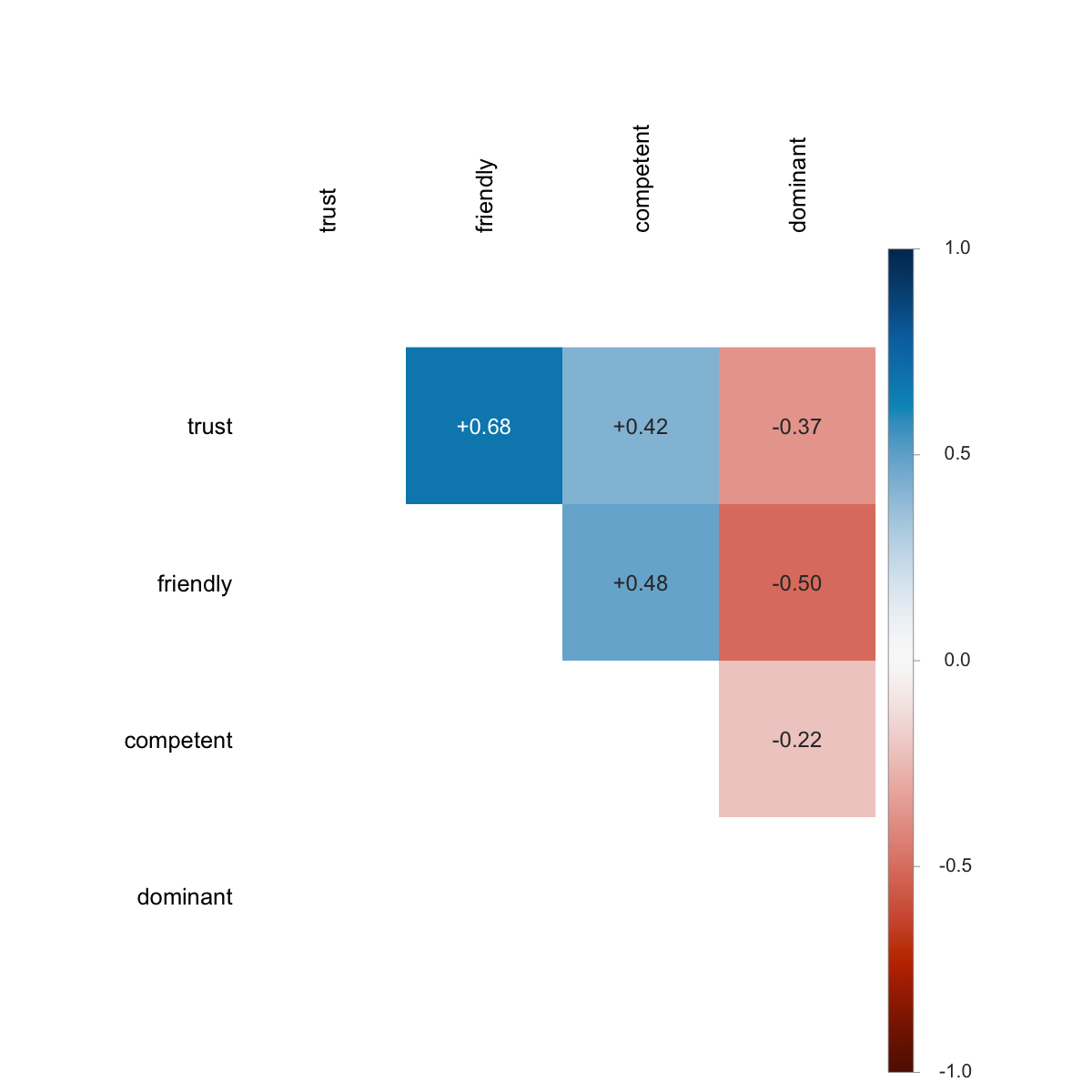

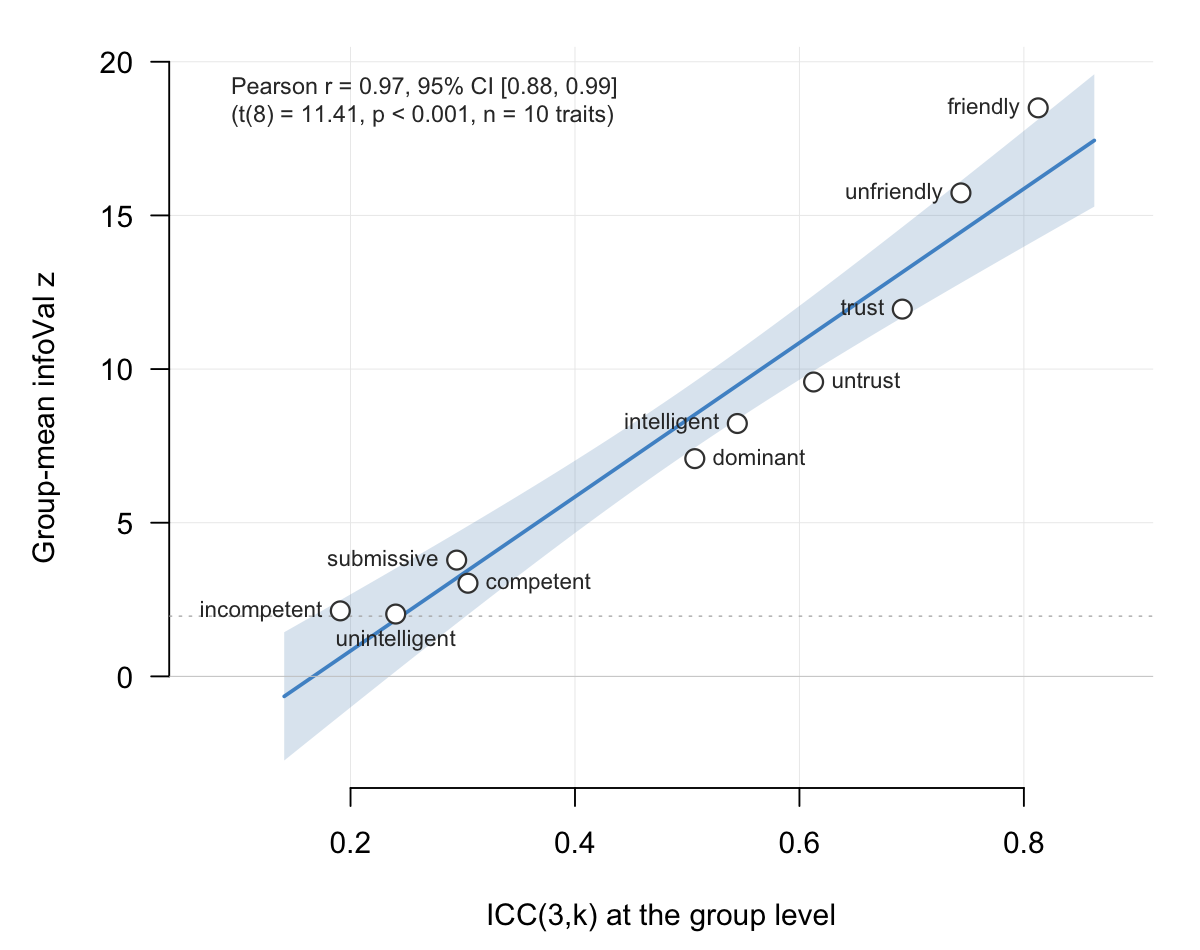

Empirically, ICC(3,k) and the group-mean infoVal z (see §9) track each other very closely on real data: both quantify how aligned the producers are on the pixel-level signal, ICC(3,k) as a variance ratio and group-mean z as the magnitude of the surviving group-mean signal against a matched reference. §12.6 shows the empirical relationship across ten trait conditions on the Oliveira et al. (2019) data (Pearson r ≈ 0.97).

7.3 rel_loo()

Background. Leave-one-out (LOO) is a jackknife-style

influence diagnostic (Tukey, 1958; Cook, 1977): for each producer in

turn, recompute the group CI with that producer removed and correlate

the result with the full-sample group CI. Producers whose removal

changes the group CI more than the others stand out as high-influence

cases worth a closer look. This is not a reliability

metric. The with-producer and without-producer group CIs share

(N-1)/N of their data, so LOO correlations are near 1 by

construction even on noisy data; the diagnostic information lives in the

relative ordering of producers, which the function summarises

as a robust z-score.

For each producer i, this function computes the Pearson

correlation between the full-sample group CI and the group CI recomputed

without producer i. Producers whose r_loo sits

well below the others are candidates for inspection.

lo <- rel_loo(signal_matrix)

lo # raw cors + z-scores + flag column

rel_loo_z(lo) # tidy data frame, sorted by z_score

plot(lo)rel_loo() is an influence-screening diagnostic, distinct

from the reliability metrics in §7.1 and §7.2. Because the full-sample

mean and the leave-one-out mean share (N-1)/N of their

data, r_loo values are near 1 by construction even on noisy

data (typically in the [0.95, 0.999] range at N = 30). The

relative ordering across producers carries the diagnostic information,

so the function reports $z_scores as the recommended

quantity.

Two flagging rules are available: "mad" (default) and

"sd" (kept for compatibility with earlier versions; will be

removed in v0.2.0). MAD is robust to the influential producers the test

is meant to flag; SD’s mean and standard deviation are themselves pulled

by the outlier. Default flag_threshold = 2.5 so that a

30-producer dataset flags ~0.3 producers by chance.

A flag prompts inspection rather than exclusion. Investigate first

(response coding, fatigue, atypical strategy) and cross-check with

run_diagnostics() to rule out coding errors before

excluding any producer.

7.4 run_reliability()

Top-level convenience function that runs

rel_split_half() and rel_icc() on a single

signal matrix and bundles both results into one

rcisignal_rel_report:

rep <- run_reliability(signal_matrix,

n_permutations = 2000L,

seed = 1L)

rep

plot(rep)rep$results$split_half and rep$results$icc

are the standalone result objects. run_reliability()

deliberately omits rel_loo(), since LOO is an

influence-screening diagnostic and bundling it into a reliability report

invites misreading r_loo’s near-1 values as

reliability.

8. Step 4: between-condition discriminability

When the design has two or more conditions, the question becomes whether their group CIs are distinguishable, both in overall magnitude and in spatial location.

The hard part of doing this honestly is the multiple comparisons

problem. A typical RC image has tens of thousands of pixels (a 256

x 256 grid has 65,536). If you compute one t-test per pixel and

threshold each at the conventional p < 0.05, you should

expect about 5% of pixels to come out “significant” by chance even when

the two conditions are identical, which is over 3,000 false-positive

pixels per image. The naive Bonferroni correction (divide alpha by the

number of tests) overcorrects in the opposite direction because pixels

are spatially correlated; CI signal lives in contiguous regions, not

independent dots.

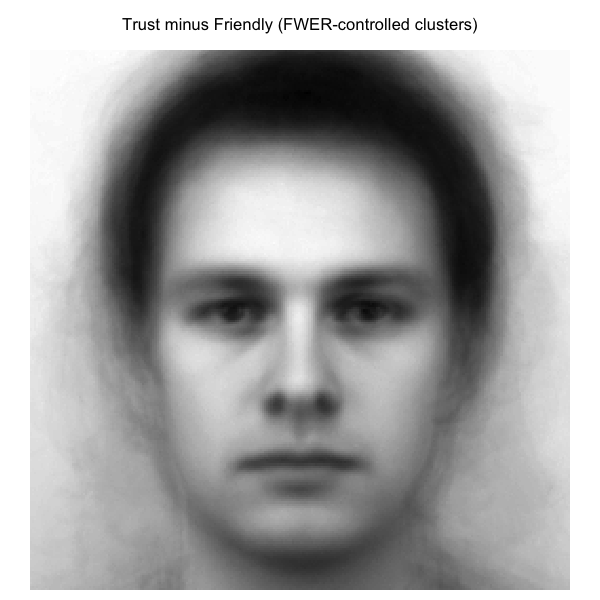

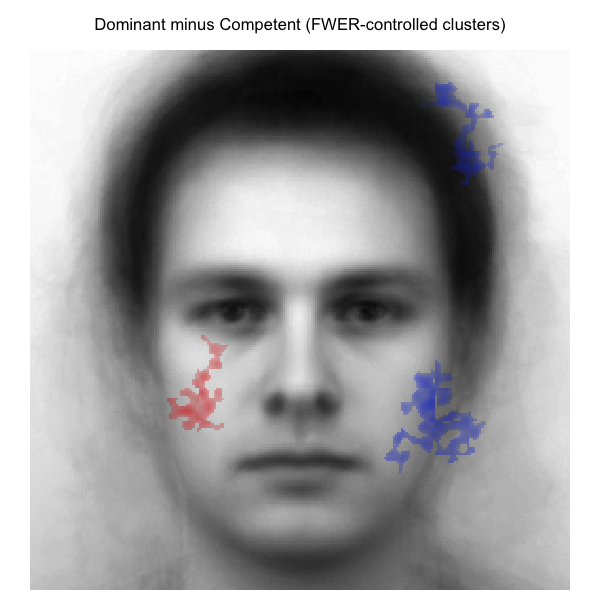

The solution adopted here is borrowed from the neuroimaging literature: build a single test statistic that summarises the spatial extent of the effect (a cluster), and calibrate it against a null distribution generated by random label permutation. This controls the family-wise error rate (FWER) in the strong sense, that is, the probability of one or more false positives anywhere in the image is held below the chosen alpha (Nichols & Holmes, 2002; Maris & Oostenveld, 2007). Two test statistics are offered:

- A classical cluster-mass statistic with a user-set cluster- forming

threshold (

rel_cluster_test(method = "threshold")). - Threshold-free cluster enhancement, TFCE

(

rel_cluster_test(method = "tfce"); Smith & Nichols, 2009), which sidesteps the threshold choice by integrating the cluster contribution to each pixel across many thresholds.

A complementary question is the overall magnitude of the

difference, independent of where in the image it sits.

rel_dissimilarity() addresses that with a single distance

number plus a bootstrap confidence interval.

8.1 pixel_t_test()

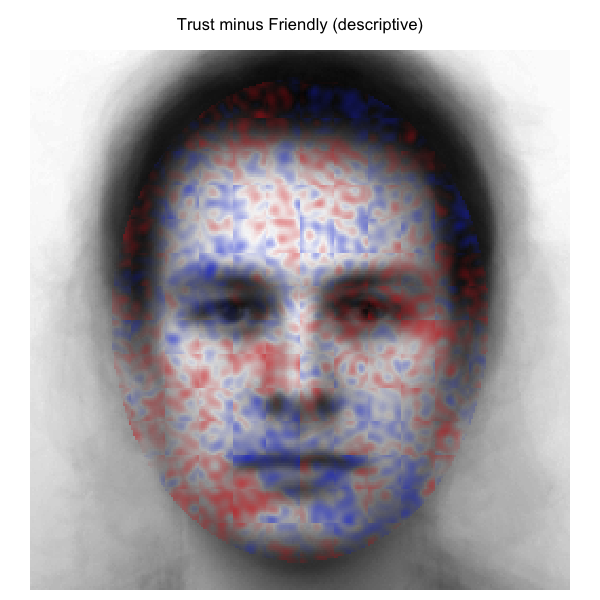

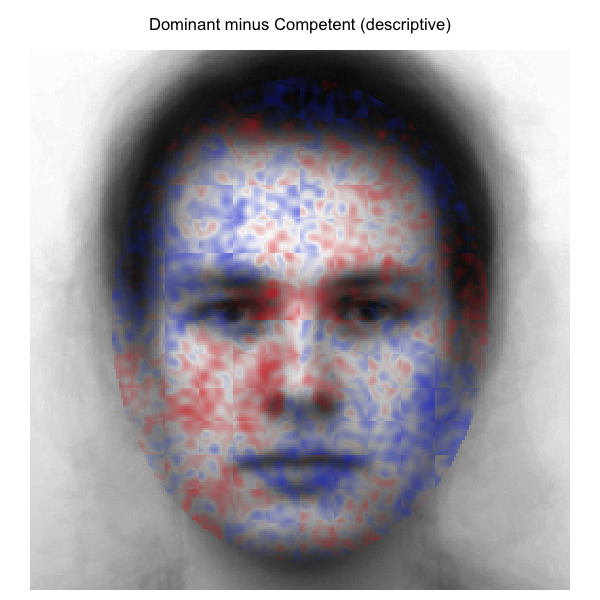

Background. The pixel-wise t-test is the building block of everything in this section: at each pixel, compare the two conditions’ producer-level signal values with a Welch’s t (or a paired t when producers are matched). The output is one t-value per pixel, an image-shaped statistical map of where the conditions differ.

This map is informative, but it is not a valid statistical test on

its own. With tens of thousands of pixels and no multiplicity

correction, raw per-pixel p-values overstate the strength of evidence

(see the §8 intro above). For that reason pixel_t_test()

returns the t-vector and stops there; turning it into inference is the

job of rel_cluster_test() (§8.2), which feeds these

per-pixel t-values into a permutation-based FWER procedure.

In code:

Vectorised Welch’s t (independent groups) or paired t (matched producers) per pixel:

t_vec <- pixel_t_test(signal_a, signal_b) # n_pixels long

t_vec_paired <- pixel_t_test(signal_a, signal_b,

paired = TRUE)Returns a numeric vector of t-values, length n_pixels

(or sum(mask) if a mask is supplied). The function serves

as an intermediate building block for rel_cluster_test()

and is not intended as a standalone inferential test (no FWER control at

the per-pixel level). For paired mode, the two matrices must have

identical ncol and matching column names.

8.2 rel_cluster_test()

Background. The cluster-based permutation test (Maris & Oostenveld, 2007) treats the spatial coherence of CI signal as a feature, not a nuisance. The procedure is in three conceptual steps:

- Compute the per-pixel t-statistic (as in §8.1), and threshold its

absolute value at some cutoff (e.g.,

|t| > 2). Each pixel is either supra-threshold or not. - Group the supra-threshold pixels into spatially contiguous

clusters using a neighbour rule. The default in

rcisignalis 4-connectivity: a pixel’s neighbours are the four pixels sharing an edge with it (up, down, left, right). 8-connectivity (which also counts diagonal neighbours) tends to merge things that only touch at a corner, producing larger and fewer clusters; 4-connectivity is the conservative default and is the one used here. - Score each cluster by its mass: the sum of the t-values inside the cluster. A small cluster of very high t-values can have the same mass as a large cluster of moderate t-values, and the mass statistic treats them as comparably strong evidence.

The hard part is calibration: how big a cluster mass would you expect

under the null (the conditions are identical)? The permutation

answer is to randomly relabel which producer is in which condition

(preserving the per-condition N), recompute the per-pixel t

and the maximum cluster mass, and repeat thousands of times. The

resulting distribution of maximum cluster masses is the empirical null,

calibrated to your data without distributional assumptions. A

real cluster’s p-value is the fraction of null max-masses that exceed

it. Because every candidate cluster is tested against the

maximum under the null, this controls the family-wise error

rate in the strong sense (Nichols & Holmes, 2002).

A note of self-criticism: the cluster-forming threshold is the one knob without a fully principled choice. Lower thresholds favour broad and diffuse effects; higher thresholds favour focal and intense ones (Smith & Nichols, 2009). When you do not have prior intuition about the spatial scale of your effect, TFCE (below) avoids having to choose.

In code, rel_cluster_test() exposes both methods.

method = "threshold" (default; Maris

& Oostenveld 2007): threshold

|t| > cluster_threshold (default 2.0), label connected

components with 4-connectivity (the conservative choice over

8-connectivity), and use cluster mass (sum of t-values within the

cluster, not pixel count) as the test statistic. The null is built by

stratified label permutation: every permutation preserves

(N_a, N_b) exactly, the pixel-wise t is recomputed on

shuffled labels, and the maximum positive and maximum negative cluster

masses are recorded. A cluster’s p-value is the fraction of null masses

(matching sign) that exceed the observed.

ct <- rel_cluster_test(

signal_a, signal_b,

img_dims = c(256L, 256L),

cluster_threshold = 2.0,

n_permutations = 2000L,

alpha = 0.05,

seed = 1L

)

ct

plot(ct)The result carries $clusters (a data frame with

cluster_id, direction, mass,

size, p_value, significant),

$null_distribution (the $pos and

$neg per-permutation max masses), and integer label

matrices $pos_labels / $neg_labels for

plotting. Maximum-statistic permutation provides FWER control in the

strong sense (Nichols & Holmes 2002).

method = "tfce" (Smith & Nichols

2009): threshold-free cluster enhancement. Instead of picking one

cluster-forming threshold, TFCE walks across many possible thresholds

and adds up, at each pixel, how much spatial support the surrounding

cluster has at each threshold height. Pixels embedded in

strongly-supported clusters end up with high TFCE scores; isolated

pixels with no neighbour support end up with low scores. Per-pixel TFCE

value is the integral over thresholds of size^E x h^H x dh;

positive and negative tails are enhanced separately and recombined with

sign preserved. No free threshold parameter to choose. Per-pixel p-value

=

(sum(null_max_abs_tfce >= |observed_tfce|) + 1) / (n_perm + 1).

ct_tfce <- rel_cluster_test(

signal_a, signal_b,

img_dims = c(256L, 256L),

method = "tfce",

tfce_H = 2.0,

tfce_E = 0.5,

seed = 1L

)Defaults match Smith & Nichols (H = 2.0,

E = 0.5, n_steps = 100). TFCE result carries

$tfce_map, $tfce_pmap,

$tfce_significant_mask instead of $clusters.

Print and plot methods branch on $method.

For a paired design, pass

paired = TRUE; the per-pixel statistic becomes paired t and

the null is built by random sign-flip on per-producer differences (exact

under exchangeability of pair sign).

8.3 rel_dissimilarity()

Background. The Euclidean distance between two CIs is the

square root of the summed squared pixel differences (equivalently, the

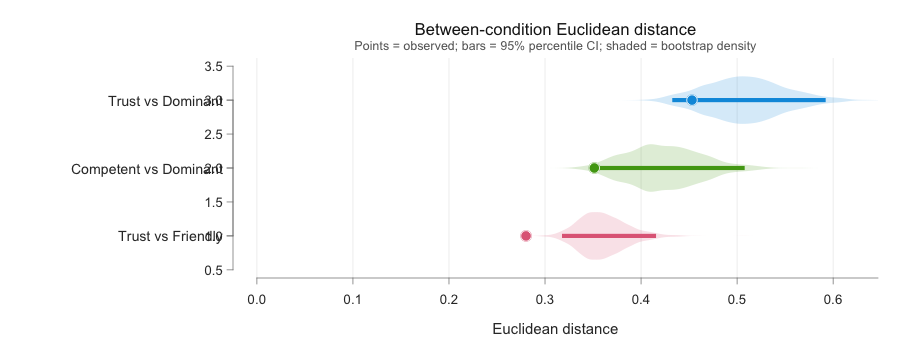

Frobenius norm of the difference matrix). It is a single- number summary

of how far apart two group CIs sit in pixel space. It ignores

where the difference lives and reports only its overall

magnitude. The advantage is simplicity: one number per contrast,

comparable across studies, with a percentile bootstrap CI (resample

producers with replacement, recompute the distance, repeat) for

uncertainty. The trade-off is loss of spatial detail, which is exactly

what rel_cluster_test() (§8.2) is for. Reported together,

the two metrics complement each other: the cluster test localises the

difference, the dissimilarity quantifies its overall size.

A self-critical note: Euclidean distance scales with image size and

with whatever absolute units the CI carries. The

$euclidean_normalised field divides by

sqrt(n_pixels) to make distances comparable across

resolutions, but cross-study comparison still requires care about

scaling conventions and the underlying base image.

In code:

Pair the spatial cluster test with a single overall magnitude summary: Euclidean distance between the two group-mean CIs, with percentile bootstrap CIs.

dr <- rel_dissimilarity(

signal_a, signal_b,

n_boot = 2000L,

ci_level = 0.95,

seed = 1L

)

dr

plot(dr)$euclidean is the raw distance;

$euclidean_normalised is

$euclidean / sqrt(n_pixels), useful for cross-resolution

comparisons. $boot_dist is the full bootstrap distribution;

$ci_dist and $boot_se_dist are the standard

summaries.

The Pearson correlation fields ($correlation,

$boot_cor, $ci_cor, $boot_se_cor)

are kept for compatibility with earlier versions but are no longer

recommended. Two base-subtracted CIs share image-domain spatial

structure (face shape, oval signal support) that pushes their

correlation above zero even when the underlying mental representations

are unrelated; absolute correlation values do not cleanly mean “these

conditions are similar”.

A null = "permutation" argument adds a chance baseline

for the Euclidean distance: stratified condition-label permutation

(between-subjects) or sign-flip on per-producer differences (paired).

When set, the result includes $d_null_p95,

$d_z (z-equivalent effect size), and

$d_ratio.

8.4 run_discriminability()

Orchestrator that runs rel_cluster_test() and

rel_dissimilarity() on a pair of signal matrices:

rep <- run_discriminability(signal_a, signal_b,

img_dims = c(256L, 256L),

cluster_threshold = 2.0,

seed = 1L)

rep

plot(rep) # cluster t-map + bootstrap dissimilarity, side by sideplot(rep) is the one-call view of the whole report. The